This week in AI: what changed and why it matters

Agent features landed where work already happens, like Excel and Word, so multi‑step tasks such as spreadsheet cleanup and draft reports now take fewer handoffs and less time to supervise. That’s a clear win for marketers, analysts, and creators who need repeatable results without living in macros or custom scripts.

Multimodal “all‑in‑one” pipelines are increasingly the default pitch: text, images, and audio go in, and a single run produces summaries, highlights, captions, or rough cuts, which reduces app‑hopping and context loss. For content teams and ops leaders, that means faster turnarounds and fewer format headaches.

A quick pulse check: the broader signal

Weekly roundups show a pattern: agent reliability is inching forward, adoption is moving from pilots to production, and policy is getting sharper teeth, especially around safety reporting and transparency. It’s not hype to say workflows are consolidating around agents plus multimodal utilities that fit into existing stacks.

If there’s a single takeaway: standardize one agent in a core process and one multimodal routine that cuts editing time by at least 20 percent, then measure and expand. Momentum favors teams that ship small wins weekly.

What this really means for work

- Less glue work: Agents now handle the grunt steps in spreadsheets and docs that usually soak up mornings, like cleaning data, building pivot summaries, and drafting outlines. That’s time back for analysis and creative choices.

- Fewer tool switches: A single multimodal run can transcribe a meeting, detect highlights, write captions, and prep vertical formats, which keeps context tight and reduces errors from copy‑paste gymnastics.

What shipped: highlights worth caring about

- Microsoft’s Agent Mode inside Excel and Word and an Office Agent in Copilot chat promise real multi‑step automation of data, reports, and decks, with iterative, dialog‑driven refinement and stronger accuracy on complex tasks. Translation: less macro hunting, more done‑for‑you scaffolding.

- California passed a first‑of‑its‑kind AI safety transparency law requiring companies to report incidents and publish safeguards, which means enterprise‑grade buyers will push vendors for clearer disclosures and better defaults. Expect ripple effects in procurement and compliance reviews.

The agent reality check: adoption and maturity

Executives and mid‑market teams are steadily moving agents from experiments to production, and industry trackers point to measurable impacts in regulated settings and high‑volume operations. Early adopters report faster cycle times, lower data costs, and better campaign velocity when agent tasks are scoped tightly and logged.

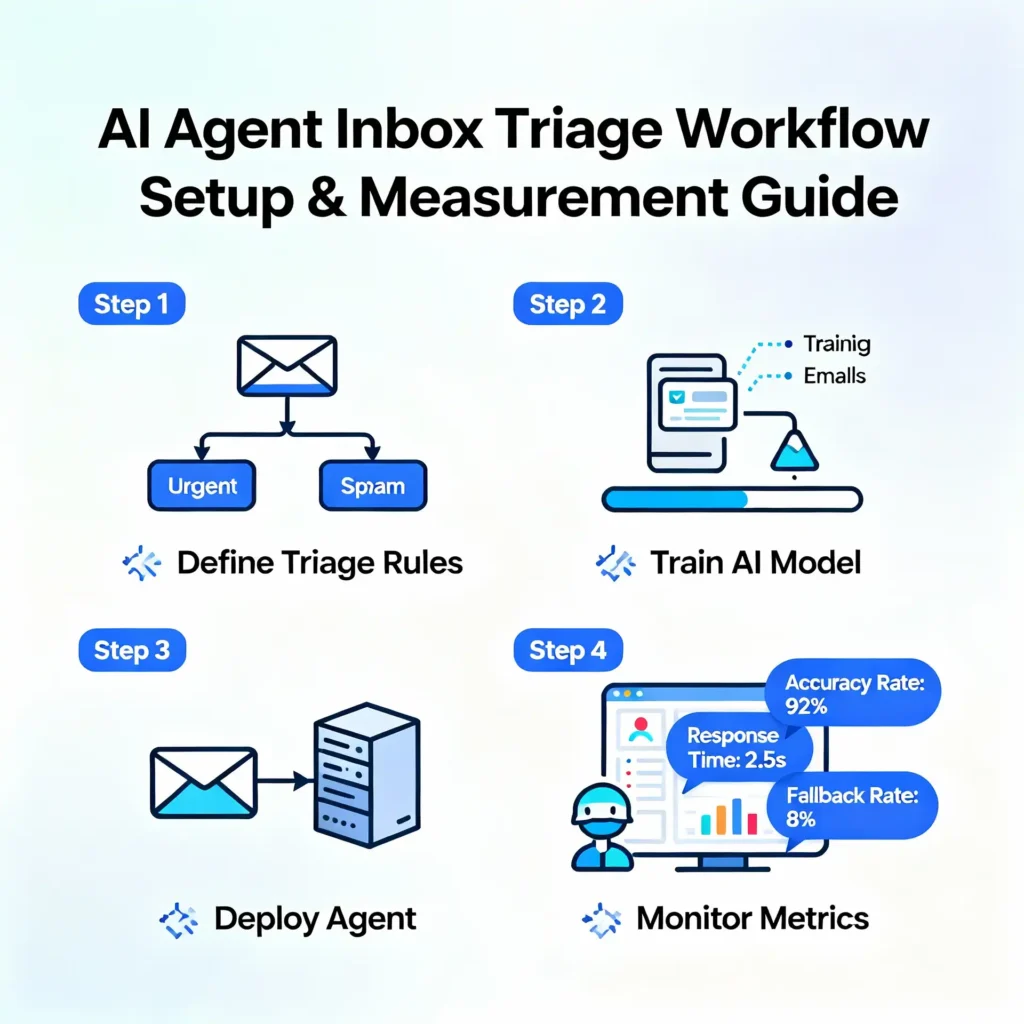

The practical advice: start with reliably scoped jobs—reporting, inbox triage, knowledge lookups—before letting agents touch customer‑visible posts, legal text, or irreversible data actions. Guardrails first, scale second.

Multimodal goes default: why that’s a big deal

Unified pipelines handling text, visuals, and audio in one flow are moving from promise to platform category: real‑time analysis, no‑code agent studios, compliance‑first variants, education suites, and dev‑centric copilots. That breadth matters because it means there’s likely a lane that fits each team without custom engineering.

For creators, multimodal chops mean meeting‑to‑article content is finally sane: transcribe, highlight, caption, thumbnail prompt, export, done. That consolidates a messy stack into a routine that a single person can run in minutes, not hours.

Action plan: ship two wins this week

- Inbox triage agent: route messages, generate draft replies, and label intent. Measure response time and error rate over 3 days to confirm value. If accuracy is stable, expand to templates.

- Meeting‑to‑clips workflow: record, transcribe, auto‑detect highlights, generate captions, and export verticals for Shorts/Reels; quality‑control before publishing. Track retention at 30 seconds and clickthrough to the long article.
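
For the inbox triage trial, the two numbers to watch can be computed from a simple log. Here is a minimal sketch, assuming each handled message is logged with the agent's label, the label a human reviewer confirmed, and the minutes it took to reply. The `TriageRecord` fields and the `summarize_triage` helper are hypothetical names for illustration, not part of any specific product.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class TriageRecord:
    predicted_intent: str    # label the agent assigned (hypothetical field)
    confirmed_intent: str    # label a human reviewer confirmed
    response_minutes: float  # time from receipt to sent reply


def summarize_triage(records):
    """Return (error_rate, median_response_minutes) for a batch of records."""
    if not records:
        raise ValueError("no records to summarize")
    # A record counts as an error when the agent's label differs from the reviewer's.
    errors = sum(r.predicted_intent != r.confirmed_intent for r in records)
    error_rate = errors / len(records)
    return error_rate, median(r.response_minutes for r in records)
```

Run it once per day of the three-day trial and compare the results; a steady or falling error rate is the signal to expand to templates.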

Field notes: where agents shine right now

- Spreadsheet cleanups: type normalization, outlier flags, pivot summaries, and chart drafts. The agent asks clarifying questions and iterates without code.

- Report scaffolds: agents that turn bullet notes and data into readable drafts with section headers and references, ready for a human finish.

Field notes: where multimodal saves hours

- Research debriefs: paste screenshots and notes, attach audio, and get a structured summary with action items and a rough article outline. That’s better than juggling three different apps.

- Social repackaging: take a long meeting or webinar and compress it into 60-second shorts and a carousel, with captions and hook variations generated in one pass.

Risks and how to reduce them

- Hallucinations in agent output: keep agents on a narrow leash with explicit steps, fixed data sources, and human approvals for any publish or send step. This cuts visible errors.

- Policy shifts: transparency mandates make vendor selection more complex, but also safer; ask for incident reporting and safeguards in writing before committing.
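
The "human approvals for any publish or send step" guardrail can be as simple as a gate in front of the agent's actions. The sketch below is one hedged way to express it; `run_step`, the action names, and the returned dictionary shape are assumptions made up for this example, not any vendor's API.

```python
# Actions that must never run without a named human approver (assumed set).
IRREVERSIBLE_ACTIONS = {"publish", "send"}


def run_step(action, payload, approved_by=None):
    """Run an agent step, holding publish/send steps until someone approves."""
    if action in IRREVERSIBLE_ACTIONS and not approved_by:
        # Park the step instead of executing it.
        return {"status": "pending_approval", "action": action}
    return {
        "status": "executed",
        "action": action,
        "approved_by": approved_by,
        "payload": payload,
    }
```

Drafting and labeling steps pass straight through, while anything customer-visible waits for a person to sign off.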

Benchmarks and momentum: adoption and impact

Recent roundups highlight enterprise progress: time‑to‑market improvements of 50 percent in some verticals, lower R&D overhead for engineering‑heavy teams, and measurable efficiency gains in document review and fraud analysis. The bar is moving from “can it do it?” to “does it do it predictably every week?”

Leaders pushing agents as a CEO‑level priority see a faster path from pilot to production because budget, security reviews, and data access get resolved early, not after a dozen proofs of concept.

Playbook: a 5‑day rollout

- Monday: Scope two tasks, write success metrics, and secure a sandbox with dummy data. Keep the blast radius small.

- Tuesday: Build the agent prompt with explicit steps and constraints; enable approvals before any publish or send action.

- Wednesday: Assemble the multimodal chain and run a full end‑to‑end test, from input to export. Document the happy path and the failure path.

- Thursday: Run both flows on real but noncritical work. Track time saved and issues, then tweak prompts and guardrails.

- Friday: Ship the standard operating procedure with screenshots, owners, and rollback steps. If metrics cleared the bar, scale to a second team.

Creator corner: practical recipes

- Article pipeline: the agent drafts an outline, the multimodal model produces a rough cut and captions, then exports variants with hook tests. That reduces “blank page” pain and speeds publishing.

- Research post series: agent compiles weekly changes in agents and multimodal launches, writer edits tone and adds anecdotes, multimodal creates charts and alt text in one pass.

Headlines you can use

- This week in AI: agents grow up, multimodal goes default. Short, shareable, and clear on the claim.

- Agents vs multimodal: what shipped and what to use. Puts the reader in a decision seat, which boosts engagement.

Metrics that matter

Retention at 30 seconds on shorts is a clean litmus test, and clickthrough from recap to long article shows whether the promise landed. On the workflow side, watch reduction in manual edits and the ratio of accepted drafts to total drafts. These are practical signals, not vanity numbers.

If the numbers stall, audit prompts and failure logs. Nine times out of ten, the issue is unclear steps or missing constraints. Fix those, then try again.
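
Both workflow and content signals reduce to simple ratios. As a rough sketch, assuming you can export a count of accepted and total drafts plus a list of per-view watch durations in seconds (the function names and input shapes here are hypothetical):

```python
def acceptance_ratio(accepted_drafts, total_drafts):
    """Share of agent drafts that were accepted by a human."""
    if total_drafts == 0:
        return 0.0
    return accepted_drafts / total_drafts


def retention_at(view_durations_seconds, mark=30):
    """Share of views that lasted at least `mark` seconds."""
    if not view_durations_seconds:
        return 0.0
    kept = sum(d >= mark for d in view_durations_seconds)
    return kept / len(view_durations_seconds)
```

Tracking the same two ratios week over week matters more than the absolute values, since the baseline differs by team and channel.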

Where this all goes next

Weekly trackers suggest the next phase is real computer use and better safety defaults, which means more hands‑off execution with fewer gotchas. That arc favors teams that document early and keep feedback tight. Playbooks beat vibes.

Policy will keep nudging vendors toward transparency, so expect safer modes to become table stakes and shadow‑IT experiments to get harder to justify. That’s good for reliability if slightly slower for tinkering.

The bottom line

Adopt one agent workflow and one multimodal routine now, measure honestly, and expand only when the numbers smile back. It’s not about chasing every shiny launch; it’s about picking two that pay rent this week. That’s how momentum compounds.

FAQs

- What is an AI agent? A system that chains steps and tools to achieve a goal autonomously, like turning messy CSVs into clean summaries and draft charts, or turning inbox chaos into labeled replies.

- What is multimodal AI? A model that takes and produces different data types (text, images, audio, and sometimes video), so one run can handle transcription, analysis, and content generation.

- Where should teams start? Pick one low‑risk agent task in a spreadsheet or doc and one multimodal routine like meeting‑to‑clips; instrument both with simple metrics.

- How do we measure success? Start with cycle time, error rate, and adoption. If those improve for two weeks, promote the workflow to “standard practice.”

- What about compliance? Favor tools with documented safeguards and incident reporting; confirm vendor policies align with internal guidelines before production.

What is an AI agent?

A system that chains steps and tools toward a goal, such as cleaning a CSV and drafting a report, with minimal oversight. Start in spreadsheets and docs.

What is multimodal AI?

Models that take and produce text, images, audio, and sometimes video, allowing a single run to transcribe, analyze, and generate content.

Where should teams start?

Pick one low-risk agent workflow and one multimodal routine with simple metrics. Prove value in five days.

How do we measure success?

Cycle time, error rate, and adoption. If those improve for two weeks, standardize.

Is this safe for regulated teams?

Better safeguards and transparency are coming online; confirm vendor reporting and add internal approvals.